
| The VMS ink is NOT iron-gall |

|

Posted by: Jorge_Stolfi - 23-04-2026, 03:53 PM - Forum: Physical material

- Replies (50)

|

|

The McCrone report stated on page 3: "In all probability, the inks used for text and drawing were iron gall inks."

But that is not correct. The instruments described in the report cannot positively identify iron-gall ink (IGI). The X-ray diffraction spectrum can identify particles of crystalline mineral pigments, like azurite, minium, hematite. For substances that are not crystalline -- which is the case of IGI -- they can only tell which chemical elements they contain, but not the substances -- how those elements are combined.

Those instruments only determined that the ink contains iron. It was natural to conclude that it was IGI, because 99.99% of the iron-containing dark ink on vellum documents and manuscripts is IGI, and it does not make sense to write on vellum with any other black ink. People would use vellum when they wanted the document or book to last for centuries, and resist rubbing, humidity, spills; and to make the text relatively tamper-proof. Only IGI would achieve that goal.

But, unfortunately, the VMS is not a "99.99% manuscript". It is a "0.01%" one. And that has led too many experts astray...

In particular the VMS text ink is definitely not IGI.

For one thing, unlike IGI, the VMS ink will come off easily and completely with water. That is clearly visible on the ultraviolet images of f116v, or inside the "ketchup" stain of f103r. In fact it seems to come off even just by rubbing.

But the real evidence is how it looks under infrared. According to You are not allowed to view links. Register or Login to view.:

Quote:According to recent reflectance measurements, iron gall inks absorb IR radiation up to 1200 nm [23] (p. 58) (Additional file 1: Suppl 2),Footnote3 while ochre already become transparent at 850 nm [25] (p. 16). An iron gall ink underdrawing could be thus determined, if the underdrawing lines absorb radiation up to 1200 nm and become invisible in higher wavelengths

And here are clips from the infrared images of You are not allowed to view links. Register or Login to view. under light of various wavelengths:

650 nm (red):

700 nm (infrared):

940 nm (infrared):

Thus the text ink on that page becomes transparent and invisible between 700 and 940 nm. It is not IGI, but probably some iron-containing brown mineral pigment like sienna.

The report itself admits that the quire numbers (Sample 19) did not show significant iron contents. Which is not strange: quire numbers, unlike the folio numbers, were only temporary instructions to the bookbinder; and thus were probably made with some other ink, like lampblack (india) ink -- which is much easier to make than IGI, lasts indefinitely in a closed bottle, and is better than IGI for writing on paper.

Another common mis-interpretation of that report is the claim that they determined that all inks and pigments were original from the 1400s.

First, as the report admits, they failed to identify many of the pigments, including the text ink and the green paint that is used on the leaves of most of the plants. (They only determined that it contained copper but was not crystalline, and guessed that it could be some unidentified organic salt of copper.) And they mis-identified others, like a red pigment as "palmierite" (which is an extremely rare colorless mineral, found only around fumaroles in volcanic areas.)

And, for those paints that they did identify, they did not determine any dates.

All they found is that the pigments that they did identify, like azurite (but not that "palmierite") were available and used in the 1400s. That is, none of the pigments that they could identify was a synthetic pigment that became available only after 1700 (like the titanium white that debunked the Vinland Map).

But all those pigments that they did identify are still available today, and would have been used by a painter or forger at any time before 1911...

All the best, --stolfi

|

|

|

| Testable signatures on VMS structure |

|

Posted by: Labyrinthinesecurity - 23-04-2026, 08:38 AM - Forum: News

- Replies (19)

|

|

Last year, M. Greshko's Naibbe cipher marked a turning point in VMS research. Thanks to his work, and to Zattera's, we are now in a position to formulate testable criteria that a script simulator (or script generator) like Naibbe must meet to replicate the behavior of the Voynich structure.

This new paper explores and proposes 4 such criteria: You are not allowed to view links. Register or Login to view.

Best regards

|

|

|

| The Guild - Europa 1410 |

|

Posted by: nablator - 20-04-2026, 08:32 PM - Forum: Fiction, Comics, Films & Videos, Games & other Media

- No Replies

|

|

Quote:Forge your path to power in medieval Europe. Choose your profession and master trade, politics, and intrigue to climb the social ladder and found a dynasty. Secure your legacy and dominate history in this immersive economic simulation.

You are not allowed to view links. Register or Login to view.

Quote:Expected to be released on Windows in 2026.

You are not allowed to view links. Register or Login to view.

|

|

|

| Progress Report: Decoding the Voynich Manuscript via the OI-2026 Protocol |

|

Posted by: Keishi Oi - 20-04-2026, 01:55 AM - Forum: The Slop Bucket

- Replies (1)

|

|

Hello everyone,

I am an independent researcher studying the Voynich Manuscript.

I made a post here recently, but it was moved to the trash bin. I was quite disappointed that it was discarded before the contents of my paper were properly reviewed.

However, upon reflection, I realize that my heavy use of modern IT terminology likely made the core concepts difficult to understand. To keep things straightforward, here are my discoveries in plain terms:

I have discovered the absolute "grammar" that constitutes the Voynich Manuscript.

This grammar consists of a strict 4-stage process.

I have determined that the foundational structure of the text is a "17x73" matrix, consisting of "17 basic frames" and "73 components."

For full details, please check the link below. I have made the data open so anyone can verify it themselves:

You are not allowed to view links. Register or Login to view.

Furthermore, below are the work-in-progress results of applying NMF (Non-negative Matrix Factorization) analysis and Cosine Similarity using this identified grammar. (Note: I have translated my Japanese working notes into English for this forum):

1. Original EVA: 9

2. Latin Base: [9: Unverified]

3. Meaning: [9: Unverified]

4. Breakdown:

- 9: [Unverified]

1. Original EVA: hae

2. Latin Base: [h: Unverified] + [a: Unverified] + [LAMENTANS / MEGISTUS / RESTITUANT]

3. Meaning: [h: Unverified] + [a: Unverified] + [lamenting / crying out / great / greatest / restore / recover]

4. Breakdown:

- h: [Unverified]

- a: [Unverified]

- e: [LAMENTANS / MEGISTUS / RESTITUANT]

1. Original EVA: ay

2. Latin Base: [a: Unverified] + [y: Unverified]

3. Meaning: [a: Unverified] + [y: Unverified]

4. Breakdown:

- a: [Unverified]

- y: [Unverified]

1. Original EVA: Akam

2. Latin Base: [A: Unverified] + [k: Unverified] + [a: Unverified] + [m: Unverified]

3. Meaning: [A: Unverified] + [k: Unverified] + [a: Unverified] + [m: Unverified]

4. Breakdown:

- A: [Unverified]

- k: [Unverified]

- a: [Unverified]

- m: [Unverified]

1. Original EVA: 2oe

2. Latin Base: [2: Unverified] + [MENTITI / SPECULARES / PHENGITES] + [LAMENTANS / MEGISTUS / RESTITUANT]

3. Meaning: [2: Unverified] + [false / imitation / transparent / mirror-like / transparent stone / mica, marble] + [lamenting / crying out / great / greatest / restore / recover]

4. Breakdown:

- 2: [Unverified]

- o: [MENTITI / SPECULARES / PHENGITES]

- e: [LAMENTANS / MEGISTUS / RESTITUANT]

1. Original EVA: !oy9

2. Latin Base: [!: Unverified] + [MENTITI / SPECULARES / PHENGITES] + [y: Unverified] + [9: Unverified]

3. Meaning: [!: Unverified] + [false / imitation / transparent / mirror-like / transparent stone / mica, marble] + [y: Unverified] + [9: Unverified]

4. Breakdown:

- !: [Unverified]

- o: [MENTITI / SPECULARES / PHENGITES]

- y: [Unverified]

- 9: [Unverified]

1. Original EVA: ýscs

2. Latin Base: [ý: Unverified] + [s: Unverified] + [FUMANTES / SORBERE / GRACILES] + [s: Unverified]

3. Meaning: [ý: Unverified] + [s: Unverified] + [smoking / vaporizing / absorbing / swallowing / thin / rare] + [s: Unverified]

4. Breakdown:

- ý: [Unverified]

- s: [Unverified]

- c: [FUMANTES / SORBERE / GRACILES]

As this is still an ongoing process, I apologize for the abundance of "Unverified" tags. I firmly believe that by expanding the corpus of literature going forward, a complete decoding of the Voynich manuscript will eventually be achieved.

Any thoughts, feedback, or opinions would be highly appreciated.

For reference, here is the Latin literature corpus used for this specific verification phase:

1. Natural Philosophy (Lucretius: De Rerum Natura)

You are not allowed to view links. Register or Login to view.

You are not allowed to view links. Register or Login to view.

You are not allowed to view links. Register or Login to view.

You are not allowed to view links. Register or Login to view.

You are not allowed to view links. Register or Login to view.

2. Astronomy & Astrology (Hyginus, Manilius, Pliny, Seneca)

You are not allowed to view links. Register or Login to view. (Hyginus: Astronomica, Book 2)

You are not allowed to view links. Register or Login to view. (Hyginus: Astronomica, Book 3)

You are not allowed to view links. Register or Login to view. (Manilius: Astronomica, Book 1)

You are not allowed to view links. Register or Login to view. (Pliny: Naturalis Historia, Book 1)

You are not allowed to view links. Register or Login to view. (Seneca: Naturales Quaestiones, Book 2)

3. Fluid Dynamics, Aqueducts, and Baths (Vitruvius, Seneca)

You are not allowed to view links. Register or Login to view. (Vitruvius: De Architectura, Book 8)

You are not allowed to view links. Register or Login to view. (Seneca: Naturales Quaestiones, Book 4)

You are not allowed to view links. Register or Login to view. (Seneca: Naturales Quaestiones, Book 6)

4. Medicine, Pharmacy, and Botany (Columella, Isidore)

You are not allowed to view links. Register or Login to view. (Columella: De Re Rustica, Book 6)

You are not allowed to view links. Register or Login to view. (Isidore: Etymologiae, Book 4 - Medicine)

You are not allowed to view links. Register or Login to view. (Isidore: Etymologiae, Book 11 - The Human Being)

You are not allowed to view links. Register or Login to view. (Isidore: Etymologiae, Book 13 - The Cosmos and its Parts)

You are not allowed to view links. Register or Login to view. (Isidore: Etymologiae, Book 17 - Agriculture and Botany)

|

|

|

| The first glyph of every line – a hint at a cipher? |

|

Posted by: JoJo_Jost - 19-04-2026, 09:22 AM - Forum: Analysis of the text

- Replies (45)

|

|

The first glyph of every line in the VMS – a statistical anomaly, or perhaps even part of the cipher?

This is a “by-product” of my statistical investigations in connection with the Bavarian hypothesis. I think this might interest others too, and I’m curious to see if you can verify it, or if it’s perhaps already known (I haven’t read about it yet). I’m posting it in the Text Analysis section because that’s where it belongs.

During the many analyses I’ve carried out, I’ve noticed that the first glyph in a line doesn’t match the translations. The effect is visible across the entire corpus and statistically distinguishes position 1 clearly from all others.

I already knew / suspected this regarding the first glyph on the page; most of you are probably aware of that. But the effect is more widespread.

A brief definition:

Anomaly rate: the proportion of tokens containing at least one internal bigram with a negative PMI – i.e. a pair of characters that occurs together less frequently in the manuscript than would be expected under independent distribution.

(PMI: Pointwise Mutual Information measures how surprising it is that two characters appear next to each other.).

Note

Only lines with at least three tokens are considered (to exclude labels and other elements; interestingly, the result is actually slightly better for lines with more than three letters, not tokens).
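For anyone who wants to try to reproduce the anomaly rate, here is a minimal Python sketch of the definition above. It is not the code behind the post. It assumes that every character of a token string is one unit (EVA units such as ch or sh would have to be mapped to single symbols first), that probabilities are estimated from plain corpus counts, and that PMI uses log base 2. The function names `bigram_pmi`, `is_anomalous` and `anomaly_rate` are made up for this illustration.

```python
import math
from collections import Counter


def bigram_pmi(tokens):
    """Return {(a, b): PMI} for every adjacent unit pair found inside the tokens.

    Assumption: each character of a token is one unit.
    """
    unigrams = Counter()
    bigrams = Counter()
    for tok in tokens:
        unigrams.update(tok)
        bigrams.update(zip(tok, tok[1:]))
    n_uni = sum(unigrams.values())
    n_bi = sum(bigrams.values())
    pmi = {}
    for (a, b), count in bigrams.items():
        p_ab = count / n_bi          # observed probability of the pair
        p_a = unigrams[a] / n_uni    # probability of the first unit
        p_b = unigrams[b] / n_uni    # probability of the second unit
        pmi[(a, b)] = math.log2(p_ab / (p_a * p_b))
    return pmi


def is_anomalous(token, pmi):
    """True if the token has at least one internal bigram with negative PMI.

    Assumption: pairs missing from the PMI table are not counted as anomalous.
    """
    return any(pmi.get(pair, 0.0) < 0 for pair in zip(token, token[1:]))


def anomaly_rate(tokens, pmi):
    """Share of tokens that contain at least one negative-PMI bigram."""
    tokens = list(tokens)
    if not tokens:
        return 0.0
    return sum(is_anomalous(t, pmi) for t in tokens) / len(tokens)
```

Usage would be to build the PMI table once from all tokens of the transliteration and then call `anomaly_rate` on the tokens of each line position separately.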

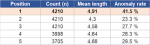

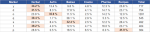

1. For each token at line positions 1 to 5, the average length and the anomaly rate are calculated:

If we group all tokens from position 2 onwards (32,425 words), they have an average length of 4.49 and an anomaly rate of 28.2%. Even so, the first word of each line deviates significantly, with 4.91 and 41.5%.

On average, the first token of each line is 0.6 units longer than the token at position 2, and its internal strings are statistically significant roughly twice as often as at any other position.

2. I was then interested in the question of what happens when the first letter is removed.

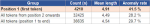

The result: if one removes the first unit of each token, the anomaly rate changes to varying degrees depending on the line position:

At position 1, removing the first unit reduces the anomaly rate by 20 percentage points; at all other positions, by only 7 to 8.

The effect at position 1 is about 2.5 times as strong.

The conspicuous sequence is therefore at the beginning of the token. If one removes precisely the first unit, the remaining word behaves statistically like a normal Voynich word.
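The comparison in step 2 could be sketched roughly like this in Python. This is only an illustration under assumptions: lines are lists of token strings, one character is one unit, the anomaly test is passed in as a function (for example one built on a fixed PMI table that is not recomputed after stripping), and lines with fewer than three tokens are skipped as in the note above. The name `rates_by_position` is hypothetical.

```python
from collections import defaultdict


def rates_by_position(lines, is_anomalous, max_pos=5, min_tokens=3):
    """Anomaly rate per line position, with and without the first unit.

    lines        : list of lines, each a list of token strings
    is_anomalous : callable(token) -> bool (assumed to be supplied by the caller)
    Returns {position: (rate_full_token, rate_without_first_unit)},
    with positions counted from 1.
    """
    totals = defaultdict(int)
    hits_full = defaultdict(int)
    hits_stripped = defaultdict(int)
    for line in lines:
        if len(line) < min_tokens:
            continue  # skip labels and other short lines
        for pos, token in enumerate(line[:max_pos], start=1):
            totals[pos] += 1
            hits_full[pos] += bool(is_anomalous(token))
            hits_stripped[pos] += bool(is_anomalous(token[1:]))
    return {
        pos: (hits_full[pos] / totals[pos], hits_stripped[pos] / totals[pos])
        for pos in sorted(totals)
    }
```

The drop at each position is then simply the first value minus the second, which can be compared between position 1 and the other positions.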

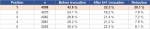

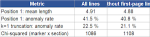

3. "The Magnificent Seven": There are mainly seven glyphs in the first position.

Total: 85.5 %

The remaining 14.5 per cent are distributed among rarer units such as ch, sh, k and others.

However, this is not the normal frequency. The overall frequency of these seven units in the corpus is significantly lower.

E.g.: p accounts for 0.84 per cent of all units in the VMS, but 7.2 per cent of all first glyphs in a line – an overrepresentation by a factor of 8.5. For s, the factor is 5.7. The distribution at position 1 therefore does not follow the general frequency of the corpus.
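The overrepresentation factor is just the share of a unit among line-initial glyphs divided by its share among all units. A small Python sketch, assuming lines are lists of token strings and one character is one unit (the function name `overrepresentation` is made up here):

```python
from collections import Counter


def overrepresentation(lines):
    """Return {glyph: factor} for every glyph that starts at least one line.

    factor = (share among first glyphs of lines) / (share among all glyphs)
    """
    first = Counter(line[0][0] for line in lines if line and line[0])
    overall = Counter(ch for line in lines for tok in line for ch in tok)
    n_first = sum(first.values())
    n_all = sum(overall.values())
    return {
        g: (first[g] / n_first) / (overall[g] / n_all)
        for g in first
    }


# The same ratio computed directly from the rounded percentages quoted for p.
factor_p = 7.2 / 0.84
```

With the rounded percentages, `factor_p` comes out at about 8.57, so the quoted factor of 8.5 presumably rests on unrounded counts.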

4. I was also interested in whether there are section-specific distributions:

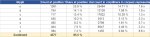

Result: The seven units are unevenly distributed across the sections. Each figure indicates the proportion of lines with this initial marker that appear in the respective section:

Herbal Astro Balneo Cosmo Pharma Recipes

Each unit has its own section distribution. "o" is concentrated in Astro, "q" in Herbal and Balneo, "p" in Recipes (f103 to f116), while p is practically absent in Astro.

If the seven markers were distributed randomly across the sections, a deviation as large as the one measured would be statistically extremely unlikely. A chi-square test confirms what is already evident in the table: each marker has its own section preference.
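For readers who want to redo the chi-square test on their own counts, here is a plain-Python sketch of the test statistic for a marker-by-section table. It assumes the input is a table of raw line counts (rows = markers, columns = sections) with no empty row or column. The function name `chi_square` is made up for this illustration, and the sketch returns only the statistic and the degrees of freedom, not a p-value.

```python
def chi_square(table):
    """Pearson chi-square statistic and degrees of freedom for a count table.

    table : list of rows, each a list of observed counts.
    Assumption: every row total and column total is greater than zero.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count if marker and section were independent.
            expected = row_totals[i] * col_totals[j] / grand
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof
```

For seven markers and six sections the test has (7 - 1) * (6 - 1) = 30 degrees of freedom. A p-value can then be read from a chi-square table or computed with a statistics package.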

5. Then I thought to myself, hmm, perhaps the effect is distorted by the first line.

In 72 per cent of cases, the first line of a page begins with a Gallow (t, p, k, f). That is a known fact. However, if one removes these first 227 page lines from the analysis, the key figures hardly change:

The key finding therefore stems from the normal text lines, not from the potentially ‘decorative’ page beginnings.

6. Summary

The first glyph of every line in the Voynich Manuscript exhibits statistical behaviour that differs from all other positions:

a) The first token is longer and statistically more anomalous

b) The effect is limited to the first glyph

c) 85 per cent of the lines begin with one of only seven units

d) The choice of this unit depends significantly on the section

----

Interpretation:

How this finding should be interpreted remains open. Possible directions:

- a calligraphic convention that manifests as a statistical glyph difference?

- a linguistic element (e.g. a clitic prefix) that is mandatory at the start of a line?

- what I believe: a specific hint at part of a cipher functionality. This is supported by the fact that I can already detect changes in the words following the initial letters, particularly p and t, but this is not yet statistically significant. Perhaps I’ll come back to this later.

---

In my opinion, the effect can't be dismissed as an artefact. What do you think?

Do you have any idea what this might be?

I’m interested in comments and, above all, whether this phenomenon has been described before. And, of course, in counter-evidence, hypotheses that might put it into a comprehensible context, etc...

Jojo

PS: It could at least suggest that, when attempting translations, one should try leaving out the first letter as a test.

|

|

|

| Author Leo Mec of MS-408 |

|

Posted by: oeesordy - 19-04-2026, 04:21 AM - Forum: Provenance & history

- Replies (4)

|

|

This will come as a surprise: I have found the author's full name and I believe he was a You are not allowed to view links. Register or Login to view., Mec was found there. His name is You are not allowed to view links. Register or Login to view. You are not allowed to view links. Register or Login to view. in You are not allowed to view links. Register or Login to view.! ckhar shal ckhar shal Mec is abbreviated I surmise. Mec comes from Mecco or Meco.

You can check with my cipher:

You are not allowed to view links. Register or Login to view.

Quote:Mecacci, Mecarelli, Mecarini, Mecarozzi, Mecca, Mecchi, Mecci, Mecco, Meccoli, Mechelli, Mecherini, Mechi, Mechini, Meco, Mecocci, Meconcelli, Meconi, Mecozzi, Mecucci, Mecuzzi

From Meco, a common derivative of the first name Domenico, meaning: sacred to God

You are not allowed to view links. Register or Login to view.

This is symbolic and a metaphor and I don't think he is implying a saber-toothed tiger. It's Leo Mec saying he is speaking in tongues and it's depicting a cat symbolic of Leo with its tongue sticking out in August.

So circa 1005-6 and Leo Mec of Northern Italy author of MS-408.

I found more details:

Quote:

Meco History, Family Crest & Coats of Arms

Early Origins of the Meco family

The surname Meco was first found in at the foundations of You are not allowed to view links. Register or Login to view. (Italian: Venezia), with the Minotto family.

Early History of the Meco family

This web page shows only a small excerpt of our Meco research. The years 1203, 1265, 1300, 1450, 1550, 1575, 1655, 1722, 1765, 1769, 1808, 1848, 1863, 1864, 1873, 1876, 1881 and 1886 are included under the topic Early Meco History in all our You are not allowed to view links. Register or Login to view. and printed products wherever possible.

Meco Spelling Variations

Enormous variation in spelling and form characterizes those Italian names that originated in the medieval era. This is caused by two main factors: regional tradition, and inaccuracies in the recording process. Before the last few You are not allowed to view links. Register or Login to view. years, scribes spelled names according to their sounds. You are not allowed to view links. Register or Login to view. were the unsurprising result. The variations of Meco include Menico, Menichi, Menech, Minico, Minichi, Menego, Meneghi, Menega, Menoga, Menoghi, Minigo, Menco, Menchi, Menci, Minco, Mengo, Menghi, Menga, Mingo, Minghi, Meni, Misotti, Minuziano, Mingoti, Menochio, Minelli, Minnelli, Menis, De Minico, Menichelli, Minichelli, Minichiello, Meneghelli, Meneghello, Meneghel, Menichini, Minichini, Minichino, Meneghini, Meniconi, Meneghino, Meneghin, Menicucci, Minicucci, Meneguzzi, Menoncini and many more.

Early Notables of the Meco family

Rosso Misotti, leader of the Ghibeline faction in Ferrara in 1203. Members of the Venetian Minotto family were brave soldiers: in 1265 Tommaso Minotto led the army against the Genoese forces; and in 1300 Marco Minotto was General of 37 ships which fought against the Greeks; Alessandro Minuziano was an editor and printer in Puglia in 1450 who printed the first edition of the collected works of Cicero; Giacomo Menochio was a prominent jurist in Pavia in 1550; Giovanni-Paolo Meniconi was...

Another 81 words (6 lines of text) are included under the topic Early Meco Notables in all our You are not allowed to view links. Register or Login to view. and printed products wherever possible.

Migration of the Meco family

Immigrants bearing the name Meco or a variant listed above include: Augusta Minghelli, who arrived in America on July 2, 1884, aboard the "St. Germain;" Alessandro Minghetti, who arrived in America on Nov. 8, 1883, aboard the ".

You are not allowed to view links. Register or Login to view.

|

|

|

| Intentionality of the Zodiac Construction |

|

Posted by: rikforto - 18-04-2026, 08:00 PM - Forum: Analysis of the text

- Replies (4)

|

|

Ladies, gentlemen, and unidentified beasts! For another thread, it became of interest for me to count the number of words in the Zodiac counter clockwise texts. The ALL CAPS signs are the sum of the 2 halves.

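| Folio | Sign | Word Count |
| --- | --- | --- |
| | TAURUS | 159 |
| | ARIES | 132 |
| f72r3 | Cancer | 122 |
| f70v2 | Pisces | 104 |
| f72v3 | Leo | 85 |
| f72r1 | Taurus | 81 |
| f72r2 | Gemini | 79 |
| f71v | Taurus | 78 |
| f72v2 | Virgo | 77 |
| f71r | Aries | 72 |
| f72v1 | Libra | 72 |
| f73r | Scorpio | 66 |
| f73v | Sagittarius | 66 |
| f70v1 | Aries | 60 |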

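| Folio | Sign | Character* Count |
| --- | --- | --- |
| | TAURUS | 947 |
| | ARIES | 836 |
| f72r3 | Cancer | 738 |
| f70v2 | Pisces | 603 |
| f72v3 | Leo | 539 |
| f72r1 | Taurus | 493 |
| f72v2 | Virgo | 491 |
| f72r2 | Gemini | 461 |
| f72v1 | Libra | 459 |
| f71v | Taurus | 454 |
| f71r | Aries | 442 |
| f73r | Scorpio | 432 |
| f73v | Sagittarius | 416 |
| f70v1 | Aries | 394 |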

*Includes the space as a character, counting the end of each of the three lines as a space.

I think the finding here is interesting if we're thinking about the intentionality of constructing the Zodiac pages. If we assume---and this may not be warranted on numerous grounds---that they were created with the text in mind, we would predict that the largest ones are the ones that got split. We see this! And we see the crowded ones that didn't get split. We also see one of the splits appearing at the bottom of the distribution, and the second half of each split appearing smaller.

Countervailing that, f72r1, f71v, and You are not allowed to view links. Register or Login to view. are all very typical, and f70v1 isn't wildly smaller than most of its peers. The size of the actual zodiacs seems to cluster around 440 +/- 55 characters, with three then being above that bound. This is closer to, but not exactly what I'd expect if the text were filling the space provided. To commit to saying the text were filling the space I'd have to say they made Cancer and Pisces more crowded, either by accident or to throw us off, but I also don't see signs they were trying to jam text in.
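The 440 +/- 55 band can be checked against the per-folio character counts listed above with a few lines of Python. The counts are copied from the table in this post. The band itself is taken as stated, not fitted, and the variable names are just for illustration.

```python
# Character counts per Zodiac diagram, keyed by folio (from the table above).
char_counts = {
    "f72r3": 738, "f70v2": 603, "f72v3": 539, "f72r1": 493,
    "f72v2": 491, "f72r2": 461, "f72v1": 459, "f71v": 454,
    "f71r": 442, "f73r": 432, "f73v": 416, "f70v1": 394,
}

center, half_width = 440, 55
low, high = center - half_width, center + half_width

inside = [f for f, n in char_counts.items() if low <= n <= high]
above = [f for f, n in char_counts.items() if n > high]
below = [f for f, n in char_counts.items() if n < low]

# Totals for the two split signs (sum of both halves).
taurus_total = char_counts["f72r1"] + char_counts["f71v"]
aries_total = char_counts["f71r"] + char_counts["f70v1"]

print(len(inside), above, below, taurus_total, aries_total)
```

Nine of the twelve diagrams fall inside the band, three lie above it (f72r3, f70v2, f72v3) and none below, and the two split-sign totals match the ALL CAPS rows.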

Given the small set and the fact neither word length nor character length are exactly standard measurements, and the fact that circles vary a bit, there's quickly a limit to what statistics can tell us, and I'm not terribly surprised to get a fuzzy result.

And so my question, numbers or features of the page, what do people see here? Did the words fill the circles until they were full or were the circles crafted as containers for predetermined words? For my part, the fact the spaces are reasonably consistent within a diagram but less so between them gives me the sense I'm looking at something planned to hold the text, but it's a subtle effect and my bias anyway.

|

|

|

| Unknown alphabet - exhibition and book |

|

Posted by: sivbugge - 17-04-2026, 11:35 AM - Forum: News

- Replies (14)

|

|

From today April 17, to June 21, 2026 the Norwegian Drawing Center in Oslo, Norway, is showing my studies of the Voynich manuscript as hand-drawn copies. Most of them fragments at the scale 1:1.

The main work is a version of the large foldout diagram, folio 85v-86r, at the scale 4:1, 180x180 cm. I have measured every detail, and copied the forms as closely as possible, but straightening up the lines, the geometry and colouring for a clearer reading. Also done with the equipment I find most useful for studies: Pencil, pen and colour pencils on paper.

Part of the exhibition is the independent publication Unknown Alphabet. The digital version can be viewed or downloaded for free here:

You are not allowed to view links. Register or Login to view.

This publication summarizes my work and suggests a decoding. In Parts 2 and 3 of the publication, there are interpretations of the images and the text in the large foldout diagram.

This probably touches on previous observations in this forum. I have tried to refer to them as thoroughly as possible, but unfortunately I have not been able to read all the threads in the forum’s history.

Hopefully there are some new contributions or ideas, such as:

- An analysis of the graphic and abstract aspects of the large foldout diagram.

- A decoding with the logic of a set of single letters that can be combined into ligatures. (An adjustment of my 2022 decoding.)

My interpretations align with some of the ideas discussed in this forum:

ReneZ and Antonio García Jiménez: The large foldout is about medicine. Healing medicine is connected to the six containers in the middle (You are not allowed to view links. Register or Login to view.). I see this theme of medicine by comparing the forms in the diagram with Brunschwig’s illustrations, and readings of Rupescissa and Testamentum, in addition to symbols from Medisinisch-chymisch-und alchemistisches Oraculum.

Koen G: There are vault-like shapes in three of the intermediary circles, as a metaphor for a celestial influence. I suggest a solution for why some of them are shaped exactly as they are.

Jorge Stolfi: I see what you call “extensive retouching” in the letters, but I have a different understanding of why there are several layers of strokes in many letters. To me, this is not “retouching” but a construction of ligatures, or combinations of letters—for example, where v and i are combined.

|

|

|

|
