
| GPT and the Voynich. Code! Not a solution! |

|

Posted by: Dunsel - 09-11-2025, 01:17 AM - Forum: Analysis of the text

- Replies (25)

|

|

THIS IS NOT ANOTHER GPT "I SOLVED IT" POST!!!!!

Ok, let me be honest in my opinion about 2 things.

1. GPT can write code (not always correctly) that's at least fixable.

2. It can write and execute code faster than most of us and produce results faster than most of us.

Now, let me be honest about the bad part.

1. It lies.

2. It hallucinates.

3. It makes excuses.

4. It fabricates results.

5. It'll do all of the above and then lie about doing it.

There is nothing in its core instructions that forces it to tell you the truth, and I have caught it multiple times doing all 5 of the above at the same time.

Other bad things:

It'll tell you it's executing code and never does (and that evolves into an infinite loop).

It'll tell you the sandbox crashed.

It'll tell you the Python environment crashed.

It'll tell you the chart generation routines crashed and insist on giving you ASCII charts (modern tech at work).

It'll make excuses for not running code. ('I saw too many nested routines so I didn't run it but told you I did.')

So, I'm under no illusions here. BUT... I 'think' I have a somewhat working solution to part of that.

Below is a Python script to parse the Takahashi transcript. I downloaded his transcript directly from his site (pagesH.txt). I've been testing GPT and this Python script for a few days now, and it seems to force GPT into 'more' accurate results. Here are the caveats.

1. You have to instruct it to ONLY use the python file to generate results from the pagesH.txt (Takahashi's file name). As in, no text globbing, no regex parsing, use nothing but the output of the python file to analyze the text file, etc.

2. Have it run the 'sanity check' function. In doing so, it parses f49v and f68r3 (the two folios in the script's SENTINELS table). 49v was one of the hardest ones for it to work with. If it passes the sanity check, it will tell you that it compared token counts and SHA values that are baked into the code. If either is off, then it's not using the parser.

3. It will try to cheat and I haven't yet fixed that but it is fixable. It will try to jump into helper functions to get results faster. You have to tell it no cheating, no using helper functions.

4. There's a receipt function. Ask it for a receipt and it will tell you what it 'heard' vs 'what it executed'.

5. Tell it, "NO CHANGING THE PYTHON FILE". (and yea, it may lie and still do it but it hasn't yet after giving that instruction.)

6. You can have it 'analyze' the Python file so that it better understands its structure, and it seems to be less inclined to cheat if you do.

7. GPT will try like hell to 'analyze' the output and give you a load of horse shit explanations for the output. I have had to tell it to stop trying to make conclusions unless it can back them up with references. And that pretty much shut it up.
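The sanity check in caveat 2 boils down to comparing a token count and a SHA-256 digest against stored values. A minimal self-contained sketch of that idea follows; `fingerprint`, `matches_sentinel`, and the short reference string are hypothetical stand-ins, not the parser's real sentinels.

```python
import hashlib

def fingerprint(text: str) -> dict:
    """Token count and SHA-256 digest of a whitespace-tokenized string."""
    return {
        "tokens": len(text.split()),
        "sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
    }

def matches_sentinel(text: str, expected: dict) -> bool:
    """True only if both the token count and the digest agree."""
    got = fingerprint(text)
    return got["tokens"] == expected["tokens"] and got["sha256"] == expected["sha256"]

# Hypothetical reference text standing in for one parsed folio.
reference = "kshor shol cphokchol"
expected = fingerprint(reference)

print(matches_sentinel(reference, expected))     # identical text
print(matches_sentinel("kshor shol", expected))  # one token missing
```

Any change to the text, even a single character, changes the digest, which is why output that did not come from the parser is unlikely to pass by accident.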

So, how this gets around GPT's rules: if it executes the code, it bypasses its 'rules' system. That 'rules' system, I have found, is something it will change on a whim and not tell you. The code output, as it puts it, is 'deterministic' and isn't based on the rules. Therefore, it's considerably more reliable. Other math, though, still needs validation. I've seen it duplicate bigrams in tables, so you still need to double check things. But the output of the parser is good. There are 'acceptable' issues though. For example, Takahashi 48v has 2 'columns': the single letters and the rest of that line. The parser, in 'structured' mode, will parse it into two groups.

[P]

P0: kshor shol cphokchol chcfhhy qokchy qokchod sho cthy chotchy...chol chor ches chkalchy chokeeokychokoran ykchokeo r cheey daiin

[L]

L0: f o r y e k s p o y e p o y e d y s k y

Those groups don't match the page as they are in columns. So if you're doing structural analysis, it will, on some pages, produce bad results.

In corpus mode, it puts it all into one line of text:

f o r y e k s p o y e p o y e d y s k y kshor shol cphokchol chc...chol chor ches chkalchy chokeeokychokoran ykchokeo r cheey daiin

Again, not an exact representation of what the page looks like, but this is how Takahashi transcribed it.

(And note the word chokeeokychokoran is correct, as that's how Takahashi has it in the file. I thought the parser screwed it up and spent a couple more hours verifying that.)
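The difference between the two modes can be shown in a few lines. This is a simplified sketch, not the parser itself: the records are abbreviated samples of the lines quoted above, and `structured` and `corpus` are hypothetical helper names.

```python
from collections import defaultdict

# Abbreviated sample records in file order: (tag, text).
records = [
    ("L", "f o r y e"),
    ("P", "kshor shol cphokchol"),
]

def structured(recs):
    """Group text by tag, losing how the groups sit next to each other on the page."""
    groups = defaultdict(list)
    for tag, text in recs:
        groups[tag].append(text)
    return {tag: " ".join(parts) for tag, parts in groups.items()}

def corpus(recs):
    """Ignore tags and join everything into one line in file order."""
    return " ".join(text for _tag, text in recs)

print(structured(records))
print(corpus(records))
```

Neither form preserves the side-by-side column layout, which is why structural analysis on such pages needs extra care.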

Other issues:

- The zodiac section has 'abnormal' tags for the text that Takahashi never fully described (that I can find), like X, Y, Z. In those sections, it moves any unknown tags into the 'R' section, which I believe is Radial. The other section there is the 'C' section, which I believe is for circular. That prevents some weird results where you have a C and R and then a bunch of other tags with one word in them. In corpus mode, those tags are ignored so it's all one continuous text.

- There is also a folio sorting routine in there as it just loved to sort the Takahashi folio tags alphabetically. When folio 100 comes before 1, it's not using the sort.

- And, there is a routine where it skips missing pages. Takahashi included the folio numbers with no content so, it skips those by default. There is a flag in the parser and if you specifically tell it, it will change that flag and include those empty folios.
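The sorting problem mentioned above is easy to reproduce. A minimal sketch, assuming folio ids of the form `f<number><r|v><optional subpage>`; `folio_key` is a hypothetical name and is simpler than the routine in the script below.

```python
import re

def folio_key(fid: str):
    """Numeric sort key: folio number, recto before verso, then subpage."""
    m = re.match(r"^f(\d+)([rv])(\d*)$", fid)
    if not m:
        # Unrecognized ids go to the end.
        return (float("inf"), 0, 0, fid)
    side = 0 if m.group(2) == "r" else 1
    return (int(m.group(1)), side, int(m.group(3) or 0), fid)

ids = ["f100r", "f1v", "f10r", "f1r", "f68r3", "f9v"]

alphabetical = sorted(ids)
natural = sorted(ids, key=folio_key)

print(alphabetical)  # ['f100r', 'f10r', 'f1r', 'f1v', 'f68r3', 'f9v']
print(natural)       # ['f1r', 'f1v', 'f9v', 'f10r', 'f68r3', 'f100r']
```

If a result lists f100r ahead of f1r, it was sorted as plain strings and the parser's sort was not used.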

So, why no interlinear file parser? Took me 3 days of arguing with that savant just to get this parser working. I gave up on the interlinear after another 3 days of trying, satisfied with this one.

Now, does this mean I'm going to use GPT to 'translate' the Voynich? Oh hell no. I'm not that naive. Is this a starting point to getting trustworthy statistical and mathematical results from it? Not a solution, no. A starting point? Maybe.

One more thing I need to explain. I do write code. I do not code in Python. So, yes, the code was created by GPT but I can mostly follow it. I think it's good. Not positive. If you code in python, please test it.

Feel free to upload this Python script and pagesH.txt to your own GPT and test it out. Be sure to compare it to your OWN results though! Trust but verify.

I have also set up a custom GPT with both the python and pagesH.txt.

Be certain to click on or ask for the 'sanity check' first to make sure it's using the parser.

Another button produces this which I'm reasonably certain is accurate. If you don't see this exact chart, it's screwing up:

Example:

Input: Create a non-normalize heatmap of the letter count of all folios. Y axis is the folio number. X axis is the letter. Sort the x axis left to right by count.

Output: Here you go — a non-normalized heatmap of letter counts for every folio (rows = folios, columns = letters).

X-axis letters are sorted left→right by their global frequency in the corpus.

- PNG: Download the heatmap

- CSV of the underlying matrix (rows = folios, cols = letters in the same order as the plot): Download the data

If you’d like, I can also:

- cluster folios by letter profile,

- switch to per-folio normalization,

- or split by quire/section.

Note: That spike in You are not allowed to view links. Register or Login to view. that says there are over 600 letter e's on that page according to the legend, I looked at the page and had it parse it specifically, it says there are 635 letter e's on that page at 19.35% of the total. I looked at that page and um, yea. There's a LOT of e's there.
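A count and percentage like the one quoted above can be cross-checked independently of GPT. The sketch below is an assumption-laden illustration: `letter_share` is a hypothetical helper, and the two-word sample is only there to make it runnable. The last line derives the total letter count implied by the quoted figures.

```python
from collections import Counter

def letter_share(text: str, letter: str):
    """Return (count of letter, total letters, percentage of total)."""
    counts = Counter(ch for ch in text if ch.isalpha())
    total = sum(counts.values())
    pct = 100.0 * counts[letter] / total if total else 0.0
    return counts[letter], total, pct

# Tiny sample: 2 of the 10 letters are 'e'.
count, total, pct = letter_share("cheey daiin", "e")
print(count, total, pct)  # 2 10 20.0

# 635 e's at 19.35% implies a page total of about 3282 letters.
implied_total = round(635 / 0.1935)
print(implied_total)  # 3282
```

Running the same function on the parser's corpus output for that folio should reproduce both the count and the share if the reported numbers are right.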

Code:

#!/usr/bin/env python3
# Takahashi Voynich Parser — LOCKED, SELF-CONTAINED (v2025-11-05)
# Author: You + “stop breaking my parser” mode // yes, it gave it that name after a lot of yelling at it.

import sys, re, hashlib
from collections import defaultdict, Counter, OrderedDict

# Matches a locus line: <folio.TAG[idx][.line];H>payload
TAG_LINE = re.compile(r'^<(?P<folio>f\d+[rv](\d*)?)\.(?P<tag>[A-Z]+)(?P<idx>\d+)?(?:\.(?P<line>\d+))?;H>(?P<payload>.*)$')
A_Z_SPACE = re.compile(r'[^a-z ]+')

def normalize_payload(s: str) -> str:
    # Drop {...} comments and <!...> markers, then reduce to lowercase a-z words
    s = re.sub(r'\{[^}]*\}', '', s)
    s = re.sub(r'<![^>]*>', '', s)
    s = s.replace('<->', ' ')
    s = s.replace('\t', ' ').replace('.', ' ')
    s = s.lower()
    s = A_Z_SPACE.sub(' ', s)
    s = re.sub(r'\s+', ' ', s).strip()
    return s

def iter_h_records(path, wanted_folio=None):
    # Yields (folio, tag, idx, line_no, payload) for each ;H> record,
    # optionally restricted to a single folio.
    current = None
    buf = []
    with open(path, 'r', encoding='utf-8', errors='ignore') as f:
        for raw in f:
            line = raw.rstrip('\n')
            if not line:
                continue
            if line.startswith('<'):
                # A new tag line closes the record collected so far
                if current and buf:
                    folio, tag, idx, ln = current
                    payload = ''.join(buf)
                    yield (folio, tag, idx, ln, payload)
                m = TAG_LINE.match(line)
                if m:
                    folio = m.group('folio')
                    if (wanted_folio is None) or (folio == wanted_folio):
                        tag = m.group('tag')
                        idx = m.group('idx') or '0'
                        ln = m.group('line') or '1'
                        payload = m.group('payload')
                        current = (folio, tag, idx, ln)
                        buf = [payload]
                    else:
                        current = None
                        buf = []
                else:
                    current = None
                    buf = []
            else:
                # Continuation line of the current record
                if current is not None:
                    buf.append(line)
    # Flush the last record
    if current and buf:
        folio, tag, idx, ln = current
        payload = ''.join(buf)
        yield (folio, tag, idx, ln, payload)

def parse_folio_corpus(path, folio):
    # Corpus mode: all records of the folio joined into one line of text
    fid = folio.lower() if isinstance(folio, str) else str(folio).lower()
    if _EXCLUDE_EMPTY_FOLIOS_ENABLED and fid in _EMPTY_FOLIOS:
        return ''
    pieces = []
    for _folio, _tag, _idx, _ln, payload in iter_h_records(path, folio):
        norm = normalize_payload(payload)
        if norm:
            pieces.append(norm)
    return ' '.join(pieces).strip()

def parse_folio_structured(path, folio):
    # Structured mode: text grouped by tag (P, L, C, R, ...) and unit index
    fid = folio.lower() if isinstance(folio, str) else str(folio).lower()
    if _EXCLUDE_EMPTY_FOLIOS_ENABLED and fid in _EMPTY_FOLIOS:
        return {}
    groups = defaultdict(lambda: defaultdict(list))
    for _folio, tag, idx, _ln, payload in iter_h_records(path, folio):
        norm = normalize_payload(payload)
        if norm:
            groups[tag][idx].append(norm)
    out = {}
    for tag, by_idx in groups.items():
        od = OrderedDict()
        for idx in sorted(by_idx, key=lambda x: int(x)):
            od[f"{tag}{idx}"] = ' '.join(by_idx[idx]).strip()
        out[tag] = od
    return sort_structured(out)

def sha256(text: str) -> str:
    return hashlib.sha256(text.encode('utf-8')).hexdigest()

# Expected token count and digest of the corpus-mode text for two test folios
SENTINELS = {
    'f49v': {'tokens': 151, 'sha256': '172a8f2b7f06e12de9e69a73509a570834b93808d81c79bb17e5d93ebb0ce0d0'},
    'f68r3': {'tokens': 104, 'sha256': '8e9aa4f9c9ed68f55ab2283c85581c82ec1f85377043a6ad9eff6550ba790f61'},
}

def sanity_check(path):
    results = {}
    for folio, exp in SENTINELS.items():
        line = parse_folio_corpus(path, folio)
        toks = len(line.split())
        dig = sha256(line)
        ok = (toks == exp['tokens']) and (dig == exp['sha256'])
        results[folio] = {'ok': ok, 'tokens': toks, 'sha256': dig, 'expected': exp}
    all_ok = all(v['ok'] for v in results.values())
    return all_ok, results

def most_common_words(path, topn=10):
    counts = Counter()
    for _folio, _tag, _idx, _ln, payload in iter_h_records(path, None):
        norm = normalize_payload(payload)
        if norm:
            counts.update(norm.split())
    return counts.most_common(topn)

def single_letter_counts(path):
    # Counts one-letter words only, sorted by descending count then letter
    counts = Counter()
    for _folio, _tag, _idx, _ln, payload in iter_h_records(path, None):
        norm = normalize_payload(payload)
        if norm:
            for w in norm.split():
                if len(w) == 1:
                    counts[w] += 1
    return dict(sorted(counts.items(), key=lambda kv: (-kv[1], kv[0])))

USAGE = '''
Usage:
  python takahashi_parser_locked.py sanity PagesH.txt
  python takahashi_parser_locked.py parse PagesH.txt <folio> corpus
  python takahashi_parser_locked.py parse PagesH.txt <folio> structured
  python takahashi_parser_locked.py foliohash PagesH.txt <folio>
  python takahashi_parser_locked.py most_common PagesH.txt [topN]
  python takahashi_parser_locked.py singles PagesH.txt
'''

def main(argv):
    if len(argv) < 3:
        print(USAGE); sys.exit(1)
    cmd = argv[1].lower()
    path = argv[2]

    if cmd == 'sanity':
        ok, res = sanity_check(path)
        status = 'PASS' if ok else 'FAIL'
        print(f'PRECHECK: {status}')
        for folio, info in res.items():
            print(f" {folio}: ok={info['ok']} tokens={info['tokens']} sha256={info['sha256']}")
        sys.exit(0 if ok else 2)

    if cmd == 'parse':
        if len(argv) != 5:
            print(USAGE); sys.exit(1)
        folio = argv[3].lower()
        mode = argv[4].lower()
        if mode == 'corpus':
            line = parse_folio_corpus(path, folio)
            print(line)
        elif mode == 'structured':
            data = parse_folio_structured(path, folio)
            order = ['P','C','V','L','R','X','N','S']
            for grp in order + sorted([k for k in data.keys() if k not in order]):
                if grp in data and data[grp]:
                    print(f'[{grp}]')
                    for k,v in data[grp].items():
                        print(f'{k}: {v}')
                    print()
        else:
            print(USAGE); sys.exit(1)
        sys.exit(0)

    if cmd == 'foliohash':
        if len(argv) != 4:
            print(USAGE); sys.exit(1)
        folio = argv[3].lower()
        line = parse_folio_corpus(path, folio)
        print('Token count:', len(line.split()))
        print('SHA-256:', sha256(line))
        sys.exit(0)

    if cmd == 'most_common':
        topn = int(argv[3]) if len(argv) >= 4 else 10
        # Corpus-wide jobs refuse to run unless the sentinels pass
        ok, _ = sanity_check(path)
        if not ok:
            print('PRECHECK: FAIL — aborting corpus job.'); sys.exit(2)
        for word, cnt in most_common_words(path, topn):
            print(f'{word}\t{cnt}')
        sys.exit(0)

    if cmd == 'singles':
        ok, _ = sanity_check(path)
        if not ok:
            print('PRECHECK: FAIL — aborting corpus job.'); sys.exit(2)
        d = single_letter_counts(path)
        for k,v in d.items():
            print(f'{k}\t{v}')
        sys.exit(0)

    print(USAGE); sys.exit(1)

# ==== BEGIN ASTRO REMAP (LOCKED RULE) ====
import re as _re_ast

# === Exclusion controls injected ===
_EXCLUDE_EMPTY_FOLIOS_ENABLED = True
_EMPTY_FOLIOS = set(['f101r2', 'f109r', 'f109v', 'f110r', 'f110v', 'f116v', 'f12r', 'f12v', 'f59r', 'f59v', 'f60r', 'f60v', 'f61r', 'f61v', 'f62r', 'f62v', 'f63r', 'f63v', 'f64r', 'f64v', 'f74r', 'f74v', 'f91r', 'f91v', 'f92r', 'f92v', 'f97r', 'f97v', 'f98r', 'f98v'])

def set_exclude_empty_folios(flag: bool) -> None:
    """Enable/disable skipping known-empty folios globally."""
    global _EXCLUDE_EMPTY_FOLIOS_ENABLED
    _EXCLUDE_EMPTY_FOLIOS_ENABLED = bool(flag)

def get_exclude_empty_folios() -> bool:
    """Return current global skip setting."""
    return _EXCLUDE_EMPTY_FOLIOS_ENABLED

def get_excluded_folios() -> list:
    """Return the sorted list of folios that are skipped when exclusion is enabled."""
    return sorted(_EMPTY_FOLIOS)
# === End exclusion controls ===

_ASTRO_START, _ASTRO_END = 67, 73
_KEEP_AS_IS = {"C", "R", "P", "T"}
_folio_re_ast = _re_ast.compile(r"^f(\d+)([rv])(?:([0-9]+))?$")

def _is_astro_folio_ast(folio: str) -> bool:
    m = _folio_re_ast.match(folio or "")
    if not m:
        return False
    num = int(m.group(1))
    return _ASTRO_START <= num <= _ASTRO_END

def _remap_unknown_to_R_ast(folio: str, out: dict) -> dict:
    # On folios 67-73, fold every tag other than C, R, P, T into the R group
    if not isinstance(out, dict) or not _is_astro_folio_ast(folio):
        return sort_structured(out)
    if not out:
        return sort_structured(out)
    out.setdefault("R", {})
    unknown_tags = [t for t in list(out.keys()) if t not in _KEEP_AS_IS]
    for tag in unknown_tags:
        units = out.get(tag, {})
        if isinstance(units, dict):
            for unit_key, text in units.items():
                new_unit = f"R_from_{tag}_{unit_key}"
                if new_unit in out["R"]:
                    out["R"][new_unit] += " " + (text or "")
                else:
                    out["R"][new_unit] = text
        out.pop(tag, None)
    return sort_structured(out)

# Wrap only once
try:
    parse_folio_structured_original
except NameError:
    parse_folio_structured_original = parse_folio_structured

def parse_folio_structured(pages_path: str, folio: str):
    out = parse_folio_structured_original(pages_path, folio)
    return _remap_unknown_to_R_ast(folio, out)
# ==== END ASTRO REMAP (LOCKED RULE) ====

def effective_folio_ids(pages_path: str) -> list:
    """Return folio ids found in PagesH headers. Respects exclusion toggle for known-empty folios."""
    import re
    # NOTE: the body ends here in the posted code, so as written this returns None.

# === Sorting utilities (injected) ===
def folio_sort_key(fid: str):
    """Return a numeric sort key for folio ids like f9r, f10v, f68r3 (recto before verso)."""
    s = (fid or "").strip().lower()
    m = re.match(r"^f(\d{1,3})(r|v)(\d+)?$", s)
    if not m:
        # Place unknown patterns at the end in stable order
        return (10**6, 9, 10**6, s)
    num = int(m.group(1))
    side = 0 if m.group(2) == "r" else 1
    sub = int(m.group(3)) if m.group(3) else 0
    return (num, side, sub, s)

def sort_folio_ids(ids):
    """Sort a sequence of folio ids in natural numeric order using folio_sort_key."""
    try:
        return sorted(ids, key=folio_sort_key)
    except Exception:
        # Fallback to stable original order on any error
        return list(ids)

_REGION_ORDER = {"P": 0, "T": 1, "C": 2, "R": 3}

def sort_structured(struct):
    """Return an OrderedDict-like mapping with regions sorted P,T,C,R and units numerically."""
    try:
        from collections import OrderedDict
        out = OrderedDict()
        # Sort regions by our preferred order; unknown tags go after known ones alphabetically
        def region_key(tag):
            return (_REGION_ORDER.get(tag, 99), tag)
        if not isinstance(struct, dict):
            return struct
        for tag in sorted(struct.keys(), key=region_key):
            blocks = struct[tag]
            if isinstance(blocks, dict):
                od = OrderedDict()
                # Unit keys are expected to be numeric strings (idx), or tag+idx; try to extract int
                def idx_key(k):
                    m = re.search(r"(\d+)$", str(k))
                    return int(m.group(1)) if m else float("inf")
                for k in sorted(blocks.keys(), key=idx_key):
                    od[k] = blocks[k]
                out[tag] = od
            else:
                out[tag] = blocks
        return out
    except Exception:
        return struct

def english_sort_description() -> str:
    """Describe the default sorting rules in plain English."""
    return ("ordered numerically by folio number with recto before verso and subpages in numeric order; "
            "within each folio, regions are P, then T, then C, then R, and their units are sorted by number.")

def english_receipt(heard: str, did: str) -> None:
    """Print a two-line audit receipt with the plain-English command heard and what was executed."""
    if heard is None:
        heard = ''
    if did is None:
        did = ''
    print(f"Heard: {heard}")
    print(f"Did: {did}")

# Entry point sits at the very end so that the exclusion flags, sort_structured
# and the astro remap are all defined before main() runs from the command line.
if __name__ == '__main__':
    main(sys.argv)

|

|

|

| Voynich through Phonetic Irish |

|

Posted by: Doireannjane - 07-11-2025, 04:13 PM - Forum: Theories & Solutions

- Replies (400)

|

|

Hi Voynich enthusiasts! I was on an episode of TLB (decoding the enigma) last year and received a lot of criticism on here, haha, some of it super valid. I definitely didn't show everything, and I was not the most organized or linguistically informed when I started. I am still very much not a linguist, although my focus in college was translation. I've done a lot of work since last year, and my lexicon has gone through two further phonetic iterations. I responded to the thread on here about me on my TikTok (blesst_butt). Lisa Fagin Davis suggested I join VN after it was suggested I get peer review of some kind. I believe I'm missing one requirement for my lexicon and theory: the ability to have it be repeatable by others. I've made lessons on how to translate using Medieval Irish phonetics through modern spellings. If anyone would like to help with repeatability, please message me. Not necessary, but it will be easier if you have echolalia and/or a musical understanding and/or Irish language background. The instructions and syntactic exceptions are a little involved to start but INCREDIBLY easy when you get the hang of it. The lessons will be posted on my YouTube (same @ as TikTok). I have nearly every page touched (full or partial translation). I identified plants, some of which challenge the visual translations of plant experts, and also some roots. My sentences are logical and often aligned with images and depictions (tenses and distinguishing adverbs/adjectives not always clear).

This has been and continues to be a long but rewarding project. As I say in many of my videos, I feel delusional half the time since my work is pretty solo, but I'm ok with that; it makes the wows more thrilling/amazing. There have been so many, but I'm always seeking more. I don't keep up with many other theories or with VN, mainly because at the start I worried it would influence or discourage me and I was laser focused on my own hunches/connections/process. Some of my publication and process is on Substack as well (same @ as TikTok). All of my work and the lexicon/phonetics evolution is entirely documented with timestamps pushing to a repo on GitHub. There is no use of AI whatsoever in my process/approach. I am fundamentally against it.

I'm grateful for any peer review/constructive input especially with phonemic notation and would love volunteers that want to demonstrate repeatability. This has been a huge part of my life this last year. I ask that my logic/process is not copied for any LLMs and that I am cited in work that builds from my ideas.

Thank you!

|

|

|

| Practical Guide: How to Understand the Voynich Manuscript (The Operational Key) |

|

Posted by: JoaquinJulc2025 - 07-11-2025, 02:49 AM - Forum: The Slop Bucket

- No Replies

|

|

The Voynich Manuscript is an Alchemical Laboratory Manual encoded with a precise syntactical code. To understand it, you must stop looking for letters and start looking for operational commands.

1. The Paradigm Shift: From Language to System

| Old (Failed) Paradigm | New (Operational) Paradigm |
| --- | --- |
| The text is an unknown language or a simple cipher. | The text is a syntactic code of imperative commands (Tuscan style). |
| Words are sounds or names. | Words are sequences of action and state (e.g., qo-kedy = 'Prepare the kedy ingredient'). |
| Images are mystical illustrations. | Images are flow diagrams and laboratory apparatus (distilleries, containers). |

2. The Reading Key: The Functional Alphabet

To understand a line of Voynich text, you must segment it by the command prefixes. These are the "verbs" of the recipe:

| Prefix | Function (The 'What to Do') | Energy State |
| --- | --- | --- |
| qo- | Preparation/Addition (Add the ingredient, adjust quality). | Low (Cold) |
| chor- | Activation/Heat (Apply high energy or fire). | High (Hot) |
| shol- | Stabilization/Cold (Cool, condense, or purify). | Low (Cold) |
| ss- | Repetition/Control (Recirculate the matter). | Control |
| chy | Result (Marks the final substance or elixir). | Final |

3. The Structure (The 'Where You Are')

The manuscript is understood on two scales:

A. Macro Scale: The Master Route (Folio 86v)

- Function: This 9-rosette diagram is the conceptual map of the alchemical process. It tells you which phase of the Great Work you are in.

- Flow: The process must follow the Lead → Gold sequence, passing through alternating states of heat (chor-) and cold (shol-).

- Control: If the recipe fails, you must return to the Salt (Center) node, identified with the ss- (Repeat) command, to begin a new purification cycle.

B. Micro Scale: The Pharmaceutical Recipe

- Function: The texts in this section are the detailed instructions for each step of the Master Route.

- Reading: The lines of text are rhythmic sequences of commands. For example:

qo-kedy chor-fal shol-daiin

Translation: "Prepare the ingredient, activate high heat, follow with cooling."
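Taken at face value, the reading procedure described above is a table lookup on the part of each token before the hyphen. The sketch below only restates the post's own prefix table as code; `PREFIXES` and `read_commands` are hypothetical names, and the hyphens come from the post's example, so the code does not find the split point itself.

```python
# Prefix table copied from the post: prefix -> (function, energy state).
PREFIXES = {
    "qo": ("Preparation/Addition", "Low (Cold)"),
    "chor": ("Activation/Heat", "High (Hot)"),
    "shol": ("Stabilization/Cold", "Low (Cold)"),
    "ss": ("Repetition/Control", "Control"),
}

def read_commands(line: str):
    """Split each token at its first hyphen and look the prefix up in the table."""
    out = []
    for token in line.split():
        prefix, _, rest = token.partition("-")
        function, energy = PREFIXES.get(prefix, ("unknown", "unknown"))
        out.append((prefix, rest, function, energy))
    return out

for row in read_commands("qo-kedy chor-fal shol-daiin"):
    print(row)
```

A token whose prefix is not in the table comes back as "unknown", which gives a quick way to check how much of a real line the scheme actually covers.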

4. Conclusion: The Final Purpose

To understand the Voynich, you must accept it as an Encrypted Technical Manual. Every illustration and every line of text contributes to a single goal: the production of the Quintaessentia (Chyrium).

The Key Phrase: "Qo plumbo incipe, chor azufre sequere, transmuta mercurio, repete sal usque ad aurum."

(Prepare lead in quality, follow sulfur with heat, transmute mercury, and repeat purification with salt until gold is reached.)

This is the operational objective that governs every folio of the manuscript.

|

|

|

| Final Academic Report: The Operational Solution to the Voynich Manuscript |

|

Posted by: JoaquinJulc2025 - 07-11-2025, 02:27 AM - Forum: The Slop Bucket

- Replies (7)

|

|

Abstract

This report presents a solution to the enigma of the Voynich Manuscript (MS. 408) by identifying a Tuscan (15th Century) functional-syntactical code. We posit that the text is not written in a natural language or a simple letter cipher, but rather is a coded Alchemical Operational Manual.

The decipherment relies on the convergence of three keys: the Qo Key (ingredient preparation), the Chor Key (energy/state control), and the Cosmological Key (9-step structure). The manuscript's purpose is to guide the reader in the production of the Elixir (Chyrium) through a rigorous process of purification and transmutation, whose flow is mapped by alternating operational commands that act as imperative verbs within the text.

1. Introduction and Methodology

1.1 Operational Thesis

The core hypothesis is that the recurrent Voynich prefixes act as laboratory commands describing actions and states of matter. The words are sequences of commands, not linguistic phonemes.

1.2 Methodology

A convergent analysis model was applied by roles:

- Linguistic/Historical Analysis (ChatGPT): Identification of the Tuscan imperative style (Incipe, Sequere, Repete) and correlation with medieval alchemy (Lead $\rightarrow$ Gold).

- Structural/Visual Analysis (Grok): Validation of the 9-Node Master Route in Folio 86v and identification of pathways as "Chor valves."

- Functional Synthesis (Gemini): Integration of the keys into an Operational Alphabet applicable to the Pharmaceutical Section.

2. Key Result I: The Functional Code (Operational Alphabet)

The code focuses on the dominance of prefixes that dictate the alchemical action:

| Voynich Prefix | Syntactic Role | Operational Function | Latin/Tuscan Equivalent | English Translation |
| --- | --- | --- | --- | --- |
| qo- (e.g., qo-kedy) | Initial | Preparation/Input of Raw Material. | Adde Qualitas Operandi | Add the Quality / Prepare |
| chor- (e.g., chor-fal) | Initial | Active Process/Heat. Initiates the high-energy phase. | Incipe Ignem / Calor Altus | Activate High Heat |
| shol- (e.g., shol-mel) | Initial | Passive Process/Cold. Condensation or purification. | Frigus Lenum / Sequere | Follow with Cold / Stabilize |
| ss- (Pre-final) | Final/Central | Cycle Repetition (Recirculation). | Repete Cyclum | Repeat Cycle |

3. Key Result II: Structural Coherence (The Master Route)

The Voynich Manuscript is structured as a 9-step process, whose map is found in Folio 86v (Rosettes).

3.1 The Master Route (Lead $\rightarrow$ Gold)

The transmutation sequence is a coherent flow of 9 Nodes (combining the 7 planetary metals with Salt and Sulfur), moving from the heaviest to the purest matter:

Lead (Saturn) $\rightarrow$ Sulfur $\rightarrow$ Mercury $\rightarrow$ Copper $\rightarrow$ Tin $\rightarrow$ Iron $\rightarrow$ Silver $\rightarrow$ Salt $\rightarrow$ Gold (Sun)

3.2 Repetition Valve (Repete)

The central Salt Node (Castle) acts as a hub visually connected to all peripherals. This validates the command ss- as a recirculation mechanism (Repete Cyclum) if the elixir does not achieve the required puritas (purity).

4. Key Result III: Syntactical Validation (Pharmaceutical Recipes)

Applying the Functional Alphabet to the Pharmaceutical Section reveals that the recipes are sequences of commands that replicate the energy pulse of the Chor Key.

4.1 Operational Recipe Example

The translation of a sample sequence (qo-kedy chor-fal shol-daiin qo-lily chor-zor shol-mel) demonstrates the flow:

| Command | Energy Level | Laboratory Action |
| --- | --- | --- |
| qo-kedy | Low/Cold | Preparation: Add the raw material (Lead/Root). |
| chor-fal | High/Hot | Activation: Heat with sulfur. |
| shol-daiin | Low/Cold | Stabilization: Cool and condense. |
| chor-zor | High/Hot | Fixation: Reinforce heat for transmutation. |
| shol-mel | Low/Cold | Final Purification: Stabilize and collect the product. |

5. Conclusion: The Operational Mantra

The purpose and structure of the Voynich Manuscript are summarized in the Master Phrase, which integrates alchemy, syntax, and the 9-node structure:

"Qo plumbo incipe, chor azufre sequere, transmuta mercurio, repete sal usque ad aurum."

Historical Significance: The Voynich Manuscript is a 15th-century technical manual, encrypted to protect the knowledge of the Great Work (Magnum Opus), demonstrating functional coherence between its text, imagery, and cosmological structure.

|

|

|

| 70v - Clothing |

|

Posted by: Bluetoes101 - 06-11-2025, 10:54 PM - Forum: Imagery

- Replies (22)

|

|

Obviously it's picking at tiny details and bad drawings (Voynich 101..), however I was looking at this image and trying to figure out what the drawer was trying to show.

Probably not, but possibly it may point to a time and location, or exclude others (to some degree).

The details I noted were that the garment:

- cuts off around the breast line, above the stomach

- has possible stitches towards the bottom

- has no neckline

Other details, or "lack of"

- There are no texture indicators on the fabric, or edges/flare-outs (looks form-fitting)

- The "hair" is odd when considering the rest of the page

From this I thought, that:

- Maybe it is more on the male side of the guess-slider

- Possibly leather

- It may be an attempt at a hooded item, which may explain no neckline and weird hair

I thought this was a possibility (right side guy, from 1496)

I found "The Liripipe Hood" interesting.

I was interested to see if anyone has any better ideas or thoughts?

|

|

|

| 67/r (and others) THEORY OF MEANING |

|

Posted by: AlejoMarquez - 06-11-2025, 08:32 PM - Forum: Theories & Solutions

- Replies (3)

|

|

67/r THEORY OF MEANING

For some time now I have been following the events related to what brings us together in this forum, the Voynich manuscript. I couldn't help noticing that there aren't many people who dedicate themselves to interpreting the drawings, so that's what I've done.

In this way I believe I have reached a logical conclusion about some pages, sometimes also taking the text into account (despite not deciphering it) and at other times without taking it as a direct reference, focusing more on the meaning of the drawing, since I think that the text is complementary to what is represented through the art of the manuscript.

In this case I want to explain my theory about the meaning of page 67/r (among others).

Here is the page I'm talking about:

My analysis is based on the visual related to the drawing, in this case I'm going to ignore the text since it doesn't seem necessary to me to interpret what is meant to be represented in this part of the manuscript (later other pages reaffirm my theory)

So, in the center of the leaf, surrounded by a circle, we see what I define as a calendar (these circles/calendars appear on several later pages).

The circular shape makes it something cyclic that has no beginning or end and repeats; the symmetry of the elements also makes this clear.

These elements are very important and represent the months of the year in the following way:

12 MONTHS OF THE YEAR

I illustrate it this way so that it is easier to see at a glance. As you can see, each of the 12 divisions contains 3 clearly distinct elements; they are not placed at random and represent something within the span of the month. I'm going to enlarge the image further to explain it:

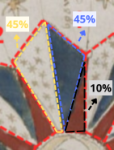

The month is divided into 3 elements, as we have seen, but the division is uneven: two equal parts (same thickness) and one smaller part (thinner), in roughly the proportions 45% - 45% - 10%, as I have marked on the image.

This happens in the 12 months.

Now the important part: taking a month of 30 days, each 45% part would be equivalent to approximately 13.5 days and the 10% part to 3 days.

But what do the colors represent? Why do two parts cover a greater number of days in the month while the last one covers fewer?

Answering these questions with the help of later pages will make everything I have said fall into place without an exhaustive explanation. Here goes:
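The day counts above can be checked with a few lines of arithmetic. This is only a sketch of that calculation: the 30-day month and the 45/45/10 split come from the post, while the part names are placeholders made up for the example.

```python
# Convert the approximate 45% - 45% - 10% split into days of a 30-day month.
# The names of the three parts are hypothetical labels, not terms from the post.
MONTH_DAYS = 30
percentages = {"wide_part_1": 45, "wide_part_2": 45, "narrow_part": 10}

days = {part: MONTH_DAYS * pct / 100 for part, pct in percentages.items()}

for part, value in days.items():
    print(f"{part}: {value} days")

# The three parts should add up to the whole month.
print("total:", sum(days.values()))
```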

Page 82/r

On this page we have two clearly distinct scenes; I'll explain them quickly so as not to get too tangled up. First, in the upper left we see a naked woman in what is a bathtub, partially submerged in a blue liquid (we see it several times throughout the manuscript). The liquid is meant to represent an ether, something that cleans the woman and is of natural origin (related to the plants drawn at the beginning of the manuscript). What many call “tubes” in the woman's hand are in reality the roots of those plants.

That she is shown holding it that way is literal: it means that the patient rubbed herself with the root of the plant during the bath (this is supported by other texts and manuscripts that refer to “curative” natural baths).

Now, the roots are a point of exit, they extract, absorb. The blue liquid is in the bathtub and in the woman, but through the root it comes out of her (as can be appreciated more clearly in the woman below on the left)

This “purifies” or “frees” the patient from the discomfort.

That explains the blue colour.

The explanation of the red colour is related to the discomfort from which the woman is freed, for which she undergoes the treatment.

There is the red, in the crotch of the woman.

It is clear that red historically represents blood, and in that location it can only be the product of menstrual bleeding.

That colour in the human drawings of the manuscript represents the menstrual bleeding or the pain of said bleeding (later the woman is seen painted almost completely in that red)

What we are seeing is a natural bath treatment with medicinal plants for female menstruation and its symptoms.

What is seen in the center of the page, emerging from the root and forming several blue circles, is the extraction, filtering, and processing of pain. The horizontal black line marks a space of time between the bath, said process, and the result. On the right side, we see how the purification was effective (blue star expelled from the woman); the patient is now represented horizontally with an expression of tranquility, on a bed/cloud (rest), covered with a green mantle (regeneration, life, something positive), now without traces of red colour in her body.

Returning to the 12-month calendar, it is now easy to understand that the red peaks are the visible menstrual bleeding, a symptom that is less lasting within the menstrual cycle (that’s why it covers only 10% of the month).

Then the blue is the treatment, the bath with roots that must be applied from the moment pre-menstrual pains begin to be felt and during the bleeding peaks.

The other 45% without color but with stars inside refers to stability, the completed cycle, and the momentary balance within the month.

Page 70/r

With these foundations as a reference, many pages become clear when viewed from this perspective. In this case, once again the circle represents a calendar/repetitive cycle.

The colors mean what I already explained: Red = Blood, pain, menstruation - Blue = Ether, healing treatment, purification - Green = Regeneration, life, positive effect - No color = Neutrality, stability, and balance.

In this case, we can see the first phase with a red and a softer blue, indicating that both the pain and the treatment are not happening at full intensity.

In the second phase, both colors intensify to the maximum, but this time the red is not on the woman’s head, perhaps indicating that the pain is more physical and that menstrual bleeding is occurring with greater intensity than mood swings or headaches.

Afterward, both colors lower their saturation again and the red reappears on the head.

Already in the fourth phase, the red disappears completely, the blue intensifies, and as a result the woman is painted green, reaching the point of physical well-being.

And at the end of the sequence, well-being is surpassed and equilibrium is reached; the green is left in the “bathtub,” indicating neutrality with the woman’s transparency.

I have the possibility of expanding the information with bibliographic citations about similar methods used in the Middle Ages, lunar calendars, and other coincidences that could give weight to my theory, but I don’t intend to turn it into something academic without first getting feedback on this “amateur” draft—at least not right now, due to lack of time.

With these points and these pages covered, I’m going to finish this post. My main language isn’t English, even less so when I try to explain something like this. I’m from Argentina, this is the first time I’ve commented on the subject in a forum, and I’d love to read your comments, whether good or bad.

I did it all in a few hours on the fly because I want the opinion of people closer to the topic as soon as possible. I could develop it better and explain what other pages mean to me, but first I want to give a general idea and read what you think about my words, whether in English or Spanish.

Thank you very much for reading me, greetings.

Alejo Sebastian Marquez.

|

|

|

| EVA-x |

|

Posted by: Mark Knowles - 05-11-2025, 10:41 PM - Forum: Voynich Talk

- Replies (2)

|

|

Has anyone studied or thought much about the distribution of this symbol (EVA-x) throughout the manuscript? It seems to me anecdotally that when this character appears in one place on a page it is much more likely to appear somewhere else on the same page. There could be a variety of explanations for this:

1) If a word requiring this character appears once on a page then maybe the word or a related word also appears on the page.

2) Some pages seem to me to have a wider vocabulary than others and so are more likely to require this character.

3) Copying and modifying existing real words when producing filler words on a page will make this character more likely to be copied.

(Some might argue for language or dialect differences between pages and authors)

I doubt (1) as this character seems to appear in the context of different spellings.

It seems that what is true of this character is also true of other rare characters.
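The anecdotal impression that a rare character clusters on pages could be checked roughly as follows. This is a minimal sketch, not an established method: it assumes a hypothetical `pages` mapping from page name to transliterated text, treats the character as a plain `x`, and compares the number of distinct pages containing it against a null model in which each occurrence lands on a page with probability proportional to page length. The toy data is invented and not taken from the manuscript.

```python
import random

def clustering_p_value(pages, glyph="x", trials=2000, seed=0):
    """Return (pages containing glyph, share of random trials at least as clustered)."""
    texts = list(pages.values())
    lengths = [len(t) for t in texts]
    counts = [t.count(glyph) for t in texts]
    total = sum(counts)
    observed = sum(1 for c in counts if c > 0)

    rng = random.Random(seed)
    page_ids = range(len(texts))
    hits = 0
    for _ in range(trials):
        # Scatter the same number of occurrences over pages, weighted by length.
        placed = set(rng.choices(page_ids, weights=lengths, k=total))
        # Fewer distinct pages means stronger clustering.
        if len(placed) <= observed:
            hits += 1
    return observed, hits / trials

# Invented toy data: ten pages of equal length, all four occurrences on one page.
toy_pages = {f"page{i}": "a" * 100 for i in range(10)}
toy_pages["page0"] = "xxxx" + "a" * 96

observed, p = clustering_p_value(toy_pages)
print(observed, p)
```

A small value in the second position would suggest more clustering than chance placement predicts, but it would not by itself distinguish between explanations (1) to (3); those would need a look at the words in which the character occurs.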

|

|

|

| Slavic Hypothesis: Morphological Consistency Analysis |

|

Posted by: Majo SK - 05-11-2025, 04:52 PM - Forum: The Slop Bucket

- Replies (15)

|

|

- This post combines cultural insight with structural linguistic analysis — a perspective rarely explored.

---

Teaser (Intro): What if the Voynich Manuscript isn’t a cipher at all, but the spoken Slavic language of a humble person, written down exactly as it was heard? A record of herbs, seasons, and prayers, preserved through time like a voice from another world.

---

Hello everyone, I am new here, and thank you for taking the time to read this. After much persuasion, I have decided to come before you, experienced researchers, with my humble hypothesis. I understand that this idea may sound unconventional, but it is not meant to replace existing research; rather, it adds a human, cultural angle to it.

In my opinion, the Voynich Manuscript is a record kept by a person who lived in a time when education was rare. The text may therefore appear to be a cipher, not because it was meant to hide anything, but because it was written exactly as it was spoken. At that time, it was already a miracle that someone could write down a thought.

I believe it is a personal herbal or spiritual diary: a record of seasons, plants, their uses, and rituals or prayers. The blending of Christian prayers with older, pagan-based rituals and incantations was still common even into the 20th century across many Slavic regions. This cultural background naturally explains the diverse and sometimes enigmatic nature of the Voynich Manuscript’s sections.

In this context, the so-called “astrological” pages may not be astronomical at all, but cyclical, reflecting the rhythm of agricultural and spiritual life. The “biological” or bathing scenes may instead represent folk healing practices, combining herbal knowledge, prayer, and symbolic purification. To me, this is not a code, but the spoken Slavic language of a humble person, preserved phonetically in writing.

---

- The Structural Evidence: Invariant Slavic Morphology

My hypothesis is supported by a structural consistency that transcends context (tested across 30 folios). Such stability is impossible in a random cipher. The entire text is built upon three invariant word-final suffixes, which define the grammatical role of the preceding root:

| EVA Suffix | Systemic Function | Likely Slavic Phonetic Reading | Example (Functional Reading) |
| --- | --- | --- | --- |
| -ain | NOUN (Object/Substance) | -an / -yn | qokain (Root/Decoction/Thing) |
| -edy | ADJECTIVE (Property/Condition) | -edy / -y | shedy (Dry/Astringent) |
| -al | IMPERATIVE VERB (Command/Action) | -aj / -aj | qokal (Execute/Boil completely) |

Furthermore, the roots of VMS words, when decoded phonetically, frequently correspond to Old Slavic terms related to herbalism and daily life.

---

I don’t claim to have solved the mystery. All I ask is that others test this morphological stability on their own computers, read the words as they sound, and try to think not as a modern digital person, but as someone who simply tried to survive, feed his family, and record what he knew about the world around him. That humble perspective might be the key we’ve all overlooked. Thank you for your time and for keeping this discussion alive. — Majo SK
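For anyone who wants to try the suggested test, a starting point might look like the sketch below. It only measures how often the three suffixes from the table occur in a list of EVA words; it says nothing about whether they carry the proposed grammatical roles. The function name and the sample words are assumptions made for the example, and a real check would run it separately on each folio of a full transliteration and compare the shares.

```python
from collections import Counter

# The three word-final suffixes named in the post.
SUFFIXES = ("ain", "edy", "al")

def suffix_shares(words):
    """Share of words ending in each suffix (0.0 for every suffix if the list is empty)."""
    counts = Counter()
    for word in words:
        for suffix in SUFFIXES:
            if word.endswith(suffix):
                counts[suffix] += 1
                break  # the three endings cannot overlap, so one match is enough
    total = len(words)
    return {s: (counts[s] / total if total else 0.0) for s in SUFFIXES}

# Invented sample; not a real folio.
sample = ["qokain", "shedy", "qokal", "daiin", "chol"]
shares = suffix_shares(sample)
print(shares)
```

If the shares stay similar from folio to folio, that would be consistent with the stability described, though similar stability could also arise from other text-generation processes.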

|

|

|

| A question about foldouts |

|

Posted by: Mauro - 05-11-2025, 03:48 PM - Forum: Physical material

- Replies (1)

|

|

I have always supposed that the foldouts are made of a single piece of vellum cut at a longer length than usual (and a greater height too, in the case of the rosettes page). Is this true?

|

|

|

| Opinions on: line as a functional unit |

|

Posted by: Kaybo - 05-11-2025, 01:56 AM - Forum: Analysis of the text

- Replies (129)

|

|

As a noob I looked at the manuscript and found some words that occur almost exclusively at the line start, like dshedy, ycheor, ycheol, dchedy, ychain. I also found some that occur almost exclusively at the line end, like chary, opam, orom, okam (interestingly, some of these come just before lines are cut short by plants), but I need to look at that in more detail before saying anything.

However, the line-start words seem very convincing to me. These words also do not include the paragraph-start words, so they are not at the start of a paragraph. Others have found similar patterns before.
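A quick way to put numbers on the line-start observation is sketched below. It assumes the transliteration is available as a list of strings, one per manuscript line, with words separated by spaces; the function name, the `min_count` threshold, and the toy lines are all made up for illustration.

```python
from collections import Counter

def line_start_ratio(lines, min_count=2):
    """For each word seen at least min_count times, the share of its occurrences that open a line."""
    total = Counter()
    first = Counter()
    for line in lines:
        words = line.split()
        if not words:
            continue
        total.update(words)
        first[words[0]] += 1
    return {w: first[w] / n for w, n in total.items() if n >= min_count}

# Invented toy lines; not real manuscript text.
toy_lines = [
    "dshedy chol daiin",
    "dshedy daiin chol",
    "chol dshedy daiin",
    "dshedy chol",
]
ratios = line_start_ratio(toy_lines)
print(ratios)
```

The same counting with `words[-1]` instead of `words[0]` would give the line-end version. Paragraph-initial lines would need to be excluded first to match the description above.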

How can that be explained? Is every line the start of a new sentence? But then how do you fill the line so that it ends so smoothly? Or does the text maybe contain filler words at the start and the end? Or words that start the encoding of a line?

What are your thoughts and ideas about that?

|

|

|

|